How it works

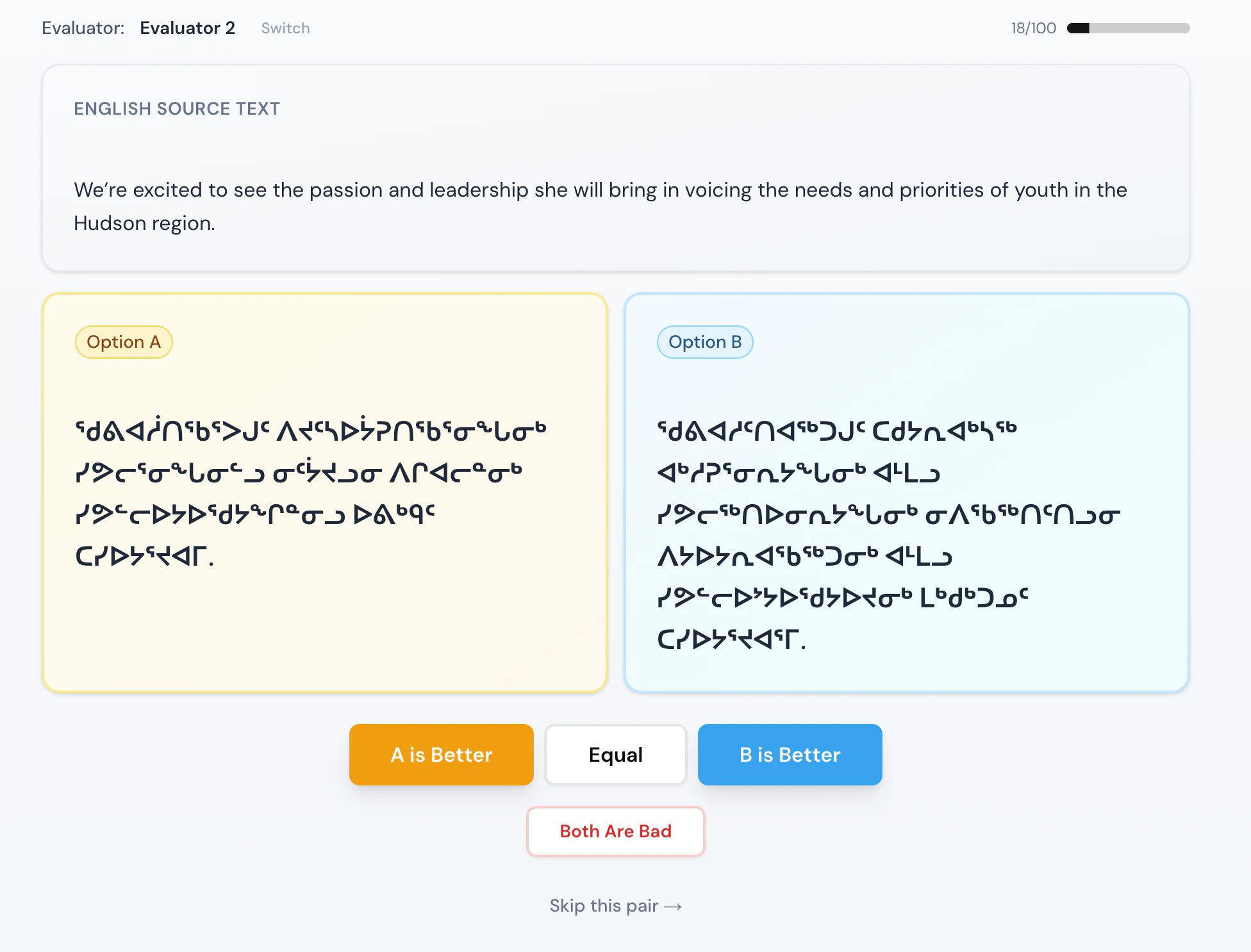

Each session presents an evaluator with a source sentence and two candidate translations, labeled A and B. The evaluator chooses one of four options: A is Better, B is Better, Both are Good, or Both are Bad. System identities are never shown, which keeps brand preference out of the judgment. Evaluators log in with a name and PIN so results stay auditable.

Current standings

| Rank | System | Elo rating | Matches | Wins | Losses |
|---|---|---|---|---|---|
| 1 | Heritage Lab (JAN-20-M) | 1060 | 37 | 26 | 11 |
| 2 | Bing Translate | 1005 | 38 | 15 | 23 |
| 3 | Google Translate | 951 | 37 | 15 | 22 |
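For readers unfamiliar with Elo ratings, the sketch below shows how a single pairwise judgment could move two systems' ratings under the standard Elo update. It is an illustration only, not the platform's actual scoring code: the K-factor of 32, the starting rating of 1000, the choice to score "both good" and "both bad" as draws, and the names `expected_score`, `update_elo`, and `SCORE_FOR_A` are all assumptions made for this example.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Expected score of A against B under the standard Elo logistic model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def update_elo(rating_a: float, rating_b: float, score_a: float, k: float = 32.0):
    """Return the new (rating_a, rating_b) after one comparison.

    score_a is 1.0 if A was judged better, 0.0 if B was judged better,
    and 0.5 for a draw. k=32 is an assumed K-factor, not a documented one.
    """
    delta = k * (score_a - expected_score(rating_a, rating_b))
    # Whatever A gains, B loses, so the total rating pool stays constant.
    return rating_a + delta, rating_b - delta


# Hypothetical mapping from the four evaluator choices to a score for A.
# Treating both "both good" and "both bad" as draws is an assumption.
SCORE_FOR_A = {
    "a_better": 1.0,
    "b_better": 0.0,
    "both_good": 0.5,
    "both_bad": 0.5,
}

# Two placeholder systems starting at an assumed baseline of 1000.
ratings = {"system_x": 1000.0, "system_y": 1000.0}
ratings["system_x"], ratings["system_y"] = update_elo(
    ratings["system_x"], ratings["system_y"], SCORE_FOR_A["a_better"]
)
print(ratings)  # {'system_x': 1016.0, 'system_y': 984.0}
```

Because the update depends on the expected score, a win over a higher-rated system moves the ratings more than a win over a lower-rated one. With only a few dozen matches per system, ratings computed this way remain sensitive to each new judgment.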

What’s next

Priorities for the coming months include getting all systems past the 50-match threshold, publishing dialect-specific results as volume grows, expanding the reference sentence set to cover more domains and registers, and feeding evaluator feedback directly into the next version of our translator.

Get involved

If you are an Inuktitut speaker, language keeper, or expert translator, your input makes this more reliable and more representative. The more evaluators we have, the more confidence we can place in the results, and the more this stays in community hands.

Leaderboard figures reflect the evaluation snapshot from April 15, 2026. The platform is continuously updated; consult the live leaderboard for current figures.

Translation testing: Shaun Annanack, Siasie Ilisituk. Statistics and platform: Anissa Jean, Ali Mehdi.